Yes, a few people wrote papers looking at tachyonic (superluminal) neutrinos in the 1990s, after experiments measuring the neutrino mass squared from tritium decay. Tritium decays with a fixed total energy into an electron, an electron antineutrino and helium-3. By measuring the maximum energy of the electron and subtracting it, you get the minimum energy of the neutrino and thus its mass. It turned out that the neutrino actually seemed to have a negative mass squared (i.e. to be tachyonic), but this was well within the error bars, e.g. $m_{\nu}^{2} = -0.67 \pm 2.53\ \mathrm{eV}^{2}$ at the Troitsk experiment. The error bar is much bigger than the data point, so the result was pretty much ignored. Still, a number of papers were written, and several other experiments also reported negative mass-squared data points.
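Strictly, subtracting energies like this only gives $m$, never a negative $m^2$. What the experiments actually do is fit $m^2$ to the shape of the electron spectrum just below the endpoint $E_0$. The minimal sketch below assumes the textbook phase-space factor $(E_0 - E)\sqrt{(E_0 - E)^2 - m^2}$ and ignores the Fermi function, nuclear recoil and final-state effects. It shows roughly why such a fit can come out negative. A positive $m^2$ removes counts near the endpoint. A negative $m^2$ (the same formula continued to $m^2 < 0$) corresponds to an excess of counts there.

```python
import math

def endpoint_shape(eps_ev: float, m2_ev2: float) -> float:
    """Toy phase-space factor near the tritium beta-decay endpoint.

    eps_ev: distance below the endpoint, E0 - E, in eV.
    m2_ev2: neutrino mass squared in eV^2 (allowed to be negative).
    Ignores the Fermi function, nuclear recoil and final-state effects.
    """
    arg = eps_ev ** 2 - m2_ev2
    if eps_ev <= 0 or arg <= 0:
        return 0.0  # no phase space left for a neutrino of this mass
    return eps_ev * math.sqrt(arg)

for m2 in (4.0, 0.0, -4.0):
    rates = [round(endpoint_shape(eps, m2), 2) for eps in (1.0, 2.0, 3.0)]
    print(f"m^2 = {m2:+.0f} eV^2 -> relative rate at 1, 2, 3 eV below E0: {rates}")
```

With these toy numbers, a negative fitted $m^2$ simply means more electrons close to $E_0$ than a massless neutrino allows. A statistical fluctuation or an unmodelled effect can easily produce that.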

Neutrinos have also been looked at for tachyonic behaviour because of their chiral nature. As far as we know, there are only left-handed neutrinos and right-handed antineutrinos (experiments might still find sterile, reversed-handedness versions of the known ones). This means that when the vacuum creates a neutrino-antineutrino pair emitted back to back, i.e. with opposite momenta, the pair has total spin 1. For non-chiral particles the total spin could be zero, which means the vacuum would decay if the particle could exist with negative energy. The chiral nature of the neutrino means this can't happen, so the vacuum is safe even with tachyonic neutrinos.
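The spin bookkeeping can be checked with a few lines of Python. The sketch assumes the pair flies apart along the $z$ axis. The vacuum has zero angular momentum, and orbital angular momentum has no component along the direction of motion. A pair can therefore only be created if the spin projections $S_z$ add up to zero. Helicity is the spin projection along a particle's own momentum, so the antineutrino moving along $-z$ contributes minus its helicity.

```python
from fractions import Fraction
from itertools import product

HALF = Fraction(1, 2)

def pair_spin_projections(nu_helicities, antinu_helicities):
    """Possible total S_z for a pair emitted back to back along the z axis.

    The neutrino moves along +z, so its S_z equals its helicity; the
    antineutrino moves along -z, so its S_z is minus its helicity.
    """
    return sorted({h_nu - h_bar for h_nu, h_bar in product(nu_helicities, antinu_helicities)})

# Chiral case: left-handed neutrino (-1/2) and right-handed antineutrino (+1/2) only.
chiral = pair_spin_projections([-HALF], [HALF])
# Hypothetical non-chiral case: both helicities allowed for each particle.
non_chiral = pair_spin_projections([-HALF, HALF], [-HALF, HALF])

print("chiral:", [str(s) for s in chiral])          # no zero: pair cannot have J = 0
print("non-chiral:", [str(s) for s in non_chiral])  # zero is allowed
```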

The small tachyonic masses (can I say measured? That would be wrong: any random measurement scattered around zero would give a negative number half the time) don't, however, match the $m^{2} \approx -(120\ \mathrm{MeV})^{2}$ needed to fit the OPERA result with tachyonic neutrinos. Nor can the effect come from oscillation into a sterile state with a much more negative mass squared (a much larger imaginary mass), since the faster-than-light arrival was seen for all the recorded particles.
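The $-(120\ \mathrm{MeV})^2$ figure can be reproduced roughly. For a tachyon with $m^2 = -\mu^2$, $E^2 = p^2 - \mu^2$ (with $c = 1$). It follows that $v = p/E = \sqrt{1 + \mu^2/E^2}$ and $\mu = E\sqrt{(1 + \delta)^2 - 1}$, where $\delta = (v - c)/c$. The inputs are assumptions, not numbers from this answer: neutrinos arriving about 60 ns early over a CERN to Gran Sasso baseline of about 730 km, and a mean neutrino energy of about 17 GeV.

```python
import math

C_M_PER_S = 299_792_458.0  # speed of light in vacuum

def fractional_excess(early_ns: float, baseline_m: float) -> float:
    """(v - c)/c for a signal that beats light by early_ns over baseline_m."""
    light_time_ns = baseline_m / C_M_PER_S * 1e9
    return light_time_ns / (light_time_ns - early_ns) - 1.0

def tachyon_mass_mev(delta: float, energy_mev: float) -> float:
    """|m| for m^2 = -|m|^2 (c = 1): E^2 = p^2 - |m|^2, so v = p/E = sqrt(1 + |m|^2/E^2)."""
    return energy_mev * math.sqrt((1.0 + delta) ** 2 - 1.0)

# Assumed inputs: ~60 ns early over a ~730 km baseline, mean energy ~17 GeV.
delta = fractional_excess(60.0, 730e3)
mass_mev = tachyon_mass_mev(delta, 17_000.0)
ratio = (mass_mev * 1e6) ** 2 / 2.53  # |m^2| in eV^2 compared with the Troitsk error bar
print(f"(v - c)/c = {delta:.2e}; |m| = {mass_mev:.0f} MeV; |m^2| / 2.53 eV^2 = {ratio:.1e}")
```

The result, about 119 MeV, means an $|m^2|$ roughly $10^{16}$ times larger than the Troitsk error bar. That is why the two cannot be the same effect.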

They are probably talking about supernovae, like SN1987A, which was first detected through its neutrinos before the light arrived. In that case neutrinos and photons are both produced in the core of the supernova explosion, but they have to get through dense clouds of gas before they reach empty space and travel freely to us. Since neutrinos are weakly interacting, they pass through the gas much more easily than photons and so break free earlier. In a fair race photons beat neutrinos (this was confirmed once the whole OPERA fiasco got sorted out).
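How much would a massive neutrino actually lose in that fair race? For $m \ll E$, $1 - v/c \approx m^2c^4/(2E^2)$, so the lag is that fraction of the light travel time. The sketch assumes a distance of about 168,000 light-years to SN1987A, a deliberately generous neutrino mass of 1 eV, and a typical supernova-neutrino energy of 10 MeV. None of these numbers come from the answer above.

```python
SECONDS_PER_YEAR = 3.156e7  # approximate length of a year in seconds

def massive_delay_s(distance_ly: float, mass_ev: float, energy_ev: float) -> float:
    """Arrival delay of a massive particle relative to light over distance_ly.

    Light needs distance_ly years; for mass << energy (c = 1),
    1 - v is about mass^2 / (2 energy^2), and the delay is that fraction
    of the light travel time.
    """
    light_time_s = distance_ly * SECONDS_PER_YEAR
    return light_time_s * mass_ev**2 / (2.0 * energy_ev**2)

# Assumed values: ~168,000 light-years, 1 eV neutrino mass, 10 MeV energy.
delay = massive_delay_s(168_000, 1.0, 10e6)
print(f"neutrinos lag light by about {delay:.3f} s")
```

The lag comes out at a few hundredths of a second, so even a small head start from escaping the star first easily dominates.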


Last (?) Edit: The "problem" is solved. It was mainly a problem in the timing chain, caused by a badly screwed-in optical fibre. A high-level description of the problem is given here and a more detailed explanation of the investigation is here.

List of possible systematic biases

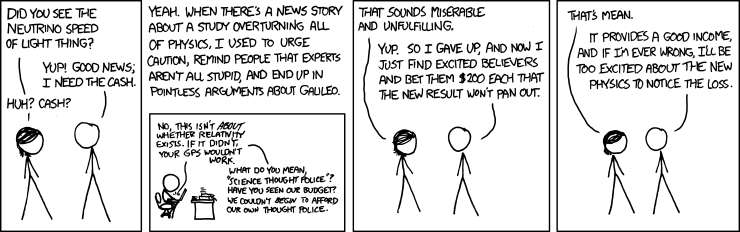

I thought it might be a good idea to list the possible systematic biases that could let xkcd's character win his bet. Like many physicists (including, I guess, many people in the OPERA collaboration), I think this will end like the Pioneer anomaly. Of course, the list only contains biases that are unlikely, but less unlikely than a violation of causality.

Location errors and clock drifts

The arXiv paper studied these and seems to exclude them. The distance seems to be known to within 20 cm and the synchronisation to within 15 ns (6.9 ns statistical and 7.4 ns systematic). If this nevertheless ended up being the explanation, it would be quite boring.
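Here is a quick sanity check on these numbers. 20 cm of baseline is a fraction of a nanosecond of light travel time. The two quoted uncertainties give about 10 ns when combined in quadrature (the usual convention for independent errors), or 14.3 ns if simply added, which is roughly the 15 ns quoted. The 60 ns size of the effect is an assumption here, taken as the 10.5 µs emission window divided by 175.

```python
import math

C_M_PER_S = 299_792_458.0  # speed of light in vacuum

distance_error_m = 0.20       # baseline "known within 20 cm"
stat_ns, syst_ns = 6.9, 7.4   # statistical and systematic uncertainties quoted in the text
effect_ns = 60.0              # assumed size of the early arrival (~10.5 us / 175)

distance_error_ns = distance_error_m / C_M_PER_S * 1e9
quadrature_ns = math.hypot(stat_ns, syst_ns)  # independent errors added in quadrature
linear_ns = stat_ns + syst_ns                  # conservative linear sum

print(f"20 cm of baseline corresponds to {distance_error_ns:.2f} ns")
print(f"total uncertainty: {quadrature_ns:.1f} ns (quadrature), {linear_ns:.1f} ns (linear)")
print(f"effect / quadrature uncertainty: {effect_ns / quadrature_ns:.1f}")
```

With these numbers the effect sits about six combined standard deviations from zero, and the distance uncertainty is negligible by comparison.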

Update: Rumors suggest that the boring explanation is the right one.

Not the same neutrinos detected

The neutrinos are emitted in a 10.5 µs window, 175 times longer than the observed effect. It is possible that the neutrinos emitted early are not exactly the same as those emitted late. Neutrino oscillation might then, for example, make the early neutrinos more detectable by the distant detector.

However, the detectors were built to measure oscillations, so I guess the OPERA collaboration thought about this and rejected it for some reason. I suppose an explanation along these lines would mean interesting new particle physics.
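To see how little it would take, here is a toy model, not OPERA's analysis. Emission is flat over the 10.5 µs window. A hypothetical detection weight falls linearly from $1 + a$ at the start of the window to $1 - a$ at the end. Suppose the offset were estimated from the mean detected time (the real analysis was more elaborate). The mean then moves earlier by $aT/6$, so a 60 ns shift (assumed target) needs only $a \approx 3.4\%$.

```python
WINDOW_US = 10.5        # emission window quoted in the text
TARGET_SHIFT_NS = 60.0  # roughly the size of the observed effect (10.5 us / 175)

def mean_detected_time_us(window_us: float, asymmetry: float, steps: int = 100_000) -> float:
    """Mean emission time of *detected* neutrinos for a flat emission window.

    Hypothetical detection weight w(t) = 1 + asymmetry * (1 - 2 t / window_us):
    neutrinos from the start of the window are (1 + asymmetry) times as
    detectable as average, those from the end (1 - asymmetry) times.
    Integrated with the midpoint rule.
    """
    dt = window_us / steps
    num = den = 0.0
    for i in range(steps):
        t = (i + 0.5) * dt
        w = 1.0 + asymmetry * (1.0 - 2.0 * t / window_us)
        num += t * w
        den += w
    return num / den

# For this linear weight the mean moves earlier by asymmetry * window / 6.
asymmetry = 6.0 * TARGET_SHIFT_NS / (WINDOW_US * 1000.0)
shift_ns = (WINDOW_US / 2.0 - mean_detected_time_us(WINDOW_US, asymmetry)) * 1000.0
print(f"asymmetry needed: {asymmetry:.1%}; simulated early shift: {shift_ns:.1f} ns")
```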

Update: This possibility was excluded by a new experiment with 3 ns pulses.

Errors in the statistical timing analysis

The timing itself is based on a fairly elaborate statistical analysis. Furthermore, the pulses are quite long (10 µs), so an error in this analysis could easily be of the right order of magnitude.
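Here is a toy version of such an analysis; everything except the 10.5 µs pulse length is hypothetical. Arrival times are drawn from a flat pulse with logistic edges about 50 ns wide, shifted by 60 ns. The shift is then recovered by a grid-scan maximum-likelihood fit against the pulse shape. Only the edges carry timing information. When the model's rising edge is placed 100 ns too late, the fitted shift drops by about half of that.

```python
import math
import random

T_US = 10.5            # pulse length quoted in the text (about 10 us)
EDGE_US = 0.05         # hypothetical width of the pulse's rising/falling edges
TRUE_SHIFT_US = 0.060  # injected offset, roughly the size of the OPERA effect

def log_sigmoid(x: float) -> float:
    """Numerically stable log(1 / (1 + exp(-x)))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))

def log_shape(t: float, rise_at: float = 0.0) -> float:
    """Log of an unnormalised flat pulse with logistic rising and falling edges."""
    return log_sigmoid((t - rise_at) / EDGE_US) + log_sigmoid((T_US - t) / EDGE_US)

def sample_arrivals(n: int, rng: random.Random) -> list:
    """Rejection-sample n arrival times from the true pulse, delayed by TRUE_SHIFT_US."""
    times = []
    while len(times) < n:
        t = rng.uniform(-10 * EDGE_US, T_US + 10 * EDGE_US)
        if rng.random() < math.exp(log_shape(t)):
            times.append(t + TRUE_SHIFT_US)
    return times

def fit_shift_ns(times: list, rise_at: float = 0.0) -> float:
    """Maximum-likelihood time shift from a grid scan (-50 ns to +200 ns, 2 ns steps).

    The normalisation of the shape does not depend on the shift, so it cancels.
    """
    grid_us = [i * 0.002 for i in range(-25, 101)]
    best = max(grid_us, key=lambda d: sum(log_shape(t - d, rise_at) for t in times))
    return best * 1000.0

rng = random.Random(2011)
times = sample_arrivals(3000, rng)
good = fit_shift_ns(times)                   # model matches the true pulse shape
biased = fit_shift_ns(times, rise_at=0.100)  # rising edge mis-modelled by 100 ns
print(f"correct model: {good:.0f} ns; mis-modelled rising edge: {biased:.0f} ns")
```

In this toy, a tens-of-nanoseconds error in modelling the long waveform translates directly into a tens-of-nanoseconds error in the result. This is presumably also why short pulses help: with 3 ns pulses each neutrino can be matched to its own pulse, and no waveform fit is needed.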

Update: This possibility was excluded by a new experiment with 3 ns pulses.