Question 1: One of the easiest ways to see this 3-fold intersection condition is in terms of "brick subdivisions" of the square. This is a subdivision of the square into rectangles such that each vertex of the subdivision lies in at most 3 of the rectangles, as in a wall of bricks. Such a subdivision can be taken fine enough to carry out the argument given in Hatcher's book. More details are in

R. Brown and A. Razak Salleh, "A van Kampen theorem for unions of non-connected spaces", Archiv. Math. 42 (1984) 85-88.

which relates this argument to the Lebesgue covering dimension. Also by using the fundamental groupoid $\pi_1(X,A)$ on a set $A$ of base points, it gives a theorem for the union of non-connected spaces.
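As a small illustration of the brick condition, here is a sketch (not taken from the paper) that builds a running-bond subdivision of the unit square and counts how many closed rectangles meet at each vertex. The function names and the `offset` flag are hypothetical, chosen only for this example. If every brick lies in some set of the open cover, a point lying in three bricks lies in a triple intersection of cover sets, which is roughly where the 3-fold condition enters.

```python
from fractions import Fraction


def brick_subdivision(rows, cols, offset=True):
    """Closed rectangles (x0, y0, x1, y1) tiling the unit square.

    With offset=True, odd rows are shifted by half a brick (running bond);
    with offset=False the result is an ordinary rows-by-cols grid.
    """
    rects = []
    for r in range(rows):
        y0, y1 = Fraction(r, rows), Fraction(r + 1, rows)
        if offset and r % 2 == 1:
            # Interior cuts sit halfway between the cuts of the even rows.
            cuts = ([Fraction(0)]
                    + [Fraction(2 * c + 1, 2 * cols) for c in range(cols)]
                    + [Fraction(1)])
        else:
            cuts = [Fraction(c, cols) for c in range(cols + 1)]
        for x0, x1 in zip(cuts, cuts[1:]):
            rects.append((x0, y0, x1, y1))
    return rects


def max_rectangles_at_a_vertex(rects):
    """Largest number of closed rectangles containing a single vertex."""
    vertices = {(x, y)
                for x0, y0, x1, y1 in rects
                for x in (x0, x1)
                for y in (y0, y1)}

    def count(p):
        return sum(x0 <= p[0] <= x1 and y0 <= p[1] <= y1
                   for x0, y0, x1, y1 in rects)

    return max(count(v) for v in vertices)


print(max_rectangles_at_a_vertex(brick_subdivision(4, 5)))                # 3
print(max_rectangles_at_a_vertex(brick_subdivision(4, 5, offset=False)))  # 4
```

The plain grid is included only for contrast: there four rectangles meet at every interior vertex, so an argument based on it would need 4-fold intersections.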

I also prefer the argument in terms of "verifying the universal property" rather than looking at relations defining the kernel of a morphism. To see this proof in the case of the union of 2 sets, see

https://groupoids.org.uk/pdffiles/vKT-proof.pdf

One of the problems in the proof for the fundamental group as given by May is that it does not generalise to higher dimensions.

Question 2: One has to ask: where do such unions of non-connected spaces arise? The standard answer, and for me the one from which all this groupoid work arose, is to obtain the fundamental group of the circle $S^1$, which is, after all, THE basic example in algebraic topology. Many other examples arise in geometric topology.
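To make the circle example concrete, here is a brief sketch of the computation. The notation is introduced only for this illustration: $U, V$ are two open arcs covering $S^1$, $A = \{a, b\}$ has one point in each component of $U \cap V$, $\mathcal{I}$ is the groupoid on $\{a,b\}$ with exactly one arrow between any two objects, and $u, v : a \to b$ are the classes of paths through $U$ and $V$ respectively.

$$\begin{aligned}
\pi_1(U \cap V, A) &\cong \text{the discrete groupoid on } \{a,b\}, \\
\pi_1(U, A) &\cong \mathcal{I} \cong \pi_1(V, A), \\
\pi_1(S^1, A) &\cong \text{the groupoid on } \{a,b\} \text{ freely generated by } u, v : a \to b, \\
\pi_1(S^1, a) &\cong \langle\, v^{-1}u \,\rangle \cong \mathbb{Z}.
\end{aligned}$$

The third line is the pushout of the first two in the category of groupoids. No single base point lies in both components of $U \cap V$, which is why the version of the theorem for the fundamental group alone does not apply to this cover.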

More answers come in applications to group theory; for example, the Kurosh subgroup theorem on subgroups of free products of groups can be proved by using a covering space of a one-point union of spaces. The cover over each space of this union usually has many components. I confess I have not seen the minimal condition of 3-fold intersection used in practice, but it is always interesting to know the minimal conditions for a theorem. It is just as well to know that one can't in general get away with 2-fold intersections.

You can see the consistent use of groupoids in $1$-dimensional homotopy theory (van Kampen theorem, homotopy theory, covering spaces, orbit spaces) in my book Topology and Groupoids (2006), available from Amazon.

Edit Feb 17, 2014:

It may be useful to point out that a sharper result than that in May's book was published in 1984 in the above Brown-Razak Salleh paper; the idea of proving a pushout result for the full fundamental groupoid, and then retracting to $\pi_1(X,A)$, is in my 1967 paper, and subsequent book, but Peter May cleverly makes it work for infinite covers; the problem is that this proof does not generalise to higher dimensions, as far as I can see.

Also Munkres' book "Topology" uses non-path-connected spaces in dealing with the Jordan Curve Theorem, and for this uses covering space rather than groupoid arguments. I do not see why books avoid $\pi_1(X,A)$, since, given the easy definition, the proofs require hardly anything beyond those for $\pi_1(X,a)$.

Edit Feb 20, 2015: A relevant question and answer is https://mathoverflow.net/questions/40945/compelling-evidence-that-two-basepoints-are-better-than-one

Some of the relevance to the history of algebraic topology, and to further developments, is in this presentation in Galway, December, 2014. See also my preprint page.

March 5, 2015

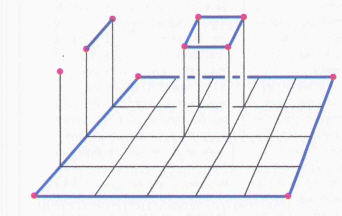

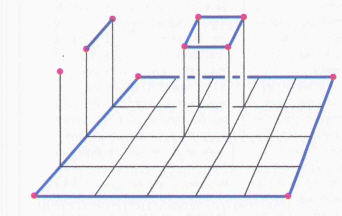

I feel this "perturbation (or deformation) trick" is of quite a fundamental nature. Above is a picture of part of a deformation involved in the proof of the 2-dimensional Seifert-van Kampen type theorem, for the crossed module involving a triple $(X,A,C)$ of spaces where $C$ is a set of base points, and $C \subseteq A \subseteq X$.

The red dots denote points of $C$.

The blue lines denote paths lying in $A$. The small squares in the bottom are supposed to denote squares lying in a set $U$ of an open cover. By making connectivity assumptions you can deform a small bottom square into the top one of the right kind, and still lying in the same open set. So, by working with a double groupoid construction $\rho_2(X,A,C)$ whose compositions are more 2-directional than the usual relative homotopy groups, you can get going with the proof of a universal property involving double groupoids, and hence for crossed modules.

We use the Seifert-van Kampen Theorem to calculate the fundamental group of a connected graph. This is Hatcher Problem 1.2.5:

It is a fact in graph theory that any connected graph $X$ contains a maximal tree $M$, namely a contractible subgraph that contains all the vertices of $X$. If $M = X$, then we are done, because for any $x_0 \in M$ we have $\pi_1(M,x_0) = \pi_1(X,x_0) = 0$, which is trivially free. Now suppose $M \neq X$. Then there is an edge $e_i$ of $X$ not in $M$. Observe that each such edge $e_i$ gives a loop in $M \cup e_i$ based at some point $x_0 \in M$. Now fix our basepoint $x_0$ to be in $M$ and suppose that the edges not in $M$ are $e_1,\ldots,e_n$. Then it is clear that

$$X = \bigcup_{i=1}^n \left(M \cup e_i\right).$$

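The intersection of any two of the sets $M \cup e_i$ and $M \cup e_j$ contains $M$ and is path connected, and so is the intersection of any three of them, because $M$ is a tree containing the endpoints of every edge. Strictly speaking the theorem needs open sets, so one should replace each $M \cup e_i$ by an open neighbourhood that deformation retracts onto it; this does not change any of the groups involved. So for any $x_0 \in M$, the Seifert-van Kampen Theorem now tells us that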

$$\pi_1(X,x_0) \cong \bigl(\pi_1(M \cup e_1,x_0) \ast \cdots \ast \pi_1(M \cup e_n,x_0)\bigr)/N$$

where $N$ is the normal subgroup generated by words of the form $l_{ij}(w)l_{ji}(w)^{-1}$, and $l_{ij}$ is the homomorphism induced by the inclusion of $(M\cup e_i) \cap (M \cup e_j)$ into $M \cup e_i$, with domain $\pi_1((M\cup e_i) \cap (M \cup e_j),x_0) = \pi_1(M \cup (e_i \cap e_j),x_0)$. Now observe that if $i \neq j$ then $M \cup (e_i \cap e_j) = M$, and since $\pi_1(M,x_0) = 0$ every $w \in \pi_1(M \cup (e_i \cap e_j),x_0)$ is trivial. If $i = j$, then $l_{ij}$ is just the identity, so our generators for $N$ are just

$$l_{ij}(w)l_{ji}(w)^{-1} = ww^{-1} = 1$$

which shows that $N$ is trivial. Now for each $i$, the group $\pi_1(M\cup e_i,x_0)$ is infinite cyclic, generated by a loop that starts at $x_0$, runs through $M$ to one endpoint of $e_i$, traverses $e_i$, and returns to $x_0$ through the maximal tree. Such a loop does not pass through any other edge $e_j$ with $j \neq i$. It follows that $\pi_1(X,x_0)$ is a free group with one basis element for each edge $e_i$, namely the loop just described.
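The count of free generators can be checked mechanically for a finite graph. The following is a minimal sketch, assuming the graph is given as a list of vertices and a list of edges (loops and multiple edges allowed); the function name `free_basis_edges` is hypothetical. It grows a spanning tree by breadth-first search and returns the edges left outside it, one for each basis element of $\pi_1(X,x_0)$.

```python
from collections import deque


def free_basis_edges(vertices, edges):
    """Return the edges lying outside a BFS spanning tree of a connected graph.

    `edges` is a list of pairs (a, b); loops and multiple edges are allowed
    and are told apart by their position in the list.
    """
    adjacency = {v: [] for v in vertices}
    for index, (a, b) in enumerate(edges):
        adjacency[a].append((b, index))
        adjacency[b].append((a, index))

    root = next(iter(vertices))
    seen = {root}
    tree = set()  # indices of the edges chosen for the maximal tree M
    queue = deque([root])
    while queue:
        v = queue.popleft()
        for w, index in adjacency[v]:
            if w not in seen:
                seen.add(w)
                tree.add(index)
                queue.append(w)

    if len(seen) != len(adjacency):
        raise ValueError("graph is not connected")
    return [edge for index, edge in enumerate(edges) if index not in tree]


# Complete graph on 4 vertices: 6 edges, 4 vertices, so rank 6 - 4 + 1 = 3.
k4_edges = [(0, 1), (0, 2), (0, 3), (1, 2), (1, 3), (2, 3)]
print(len(free_basis_edges([0, 1, 2, 3], k4_edges)))  # 3
```

For a finite connected graph the number of returned edges is always $E - V + 1$, since a spanning tree on $V$ vertices has $V - 1$ edges.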

Best Answer

Reducing the nLab theorem to tom Dieck's theorem breaks down when one tries to show that the interiors of $\tilde X_0, \tilde X_1$ cover $\tilde X$. At least there is no simple proof, though it could nevertheless be true. Anyway, we do not need it. In fact, tom Dieck's theorem relies on two ingredients:

1. Theorem (2.6.1), which states a pushout property for fundamental groupoids under the assumption that $X_0$ and $X_1$ are subspaces of $X$ such that the interiors cover $X$.

2. The existence of a retraction functor $r : \Pi(Z) \to \Pi(Z,z)$, which tom Dieck only defines for path connected $Z$. This works as follows: For each object $x$ of $\Pi(Z)$ (i.e. each point $x \in Z$) we define $r(x) = z$. For the morphisms we proceed as follows: We choose any morphism $u_x : x \to z$ if $x \ne z$ and take $u_z = id_z$, the path homotopy class of the constant path at $z$. Given a morphism $\alpha : x \to y$ in $\Pi(Z)$, we define $r(\alpha) = u_y \alpha u_x^{-1}$.
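For completeness, here is the short check that this $r$ is a functor and a retraction. The morphisms $\beta : y \to w$ and $\gamma : z \to z$ are extra notation used only in this verification.

$$\begin{aligned}
r(\beta\alpha) &= u_w\,\beta\alpha\,u_x^{-1} = (u_w\,\beta\,u_y^{-1})(u_y\,\alpha\,u_x^{-1}) = r(\beta)\,r(\alpha), \\
r(id_x) &= u_x\,u_x^{-1} = id_z, \\
r(\gamma) &= u_z\,\gamma\,u_z^{-1} = \gamma \quad \text{for } \gamma : z \to z, \text{ since } u_z = id_z.
\end{aligned}$$

The last line says that $r$ restricts to the identity on $\Pi(Z,z)$, and this is the only place where the normalisation $u_z = id_z$ is used.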

We shall see that 2. can be generalized so that we can prove

Theorem (Seifert-van Kampen). Let $X$ be a topological space and let $X_0,X_1\subset X$ be subsets whose interiors cover $X$ and whose intersection $X_{01}=X_0\cap X_1$ is path connected. Then for any choice of base point $\ast\in X_{01}$, the square

$$\begin{matrix}\pi_1(X_{01},\ast) & \to & \pi_1(X_0,\ast)\\ \downarrow&&\downarrow \\ \pi_1(X_1,\ast) & \to & \pi_1(X,\ast) \end{matrix}$$

is a pushout in the category of groups.
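In more concrete terms, the pushout statement can be unwound as an amalgamated free product. Here $i_0$ and $i_1$ are names introduced for this remark; they denote the homomorphisms induced by the inclusions $X_{01} \subset X_0$ and $X_{01} \subset X_1$.

$$\pi_1(X,\ast) \;\cong\; \bigl(\pi_1(X_0,\ast) \ast \pi_1(X_1,\ast)\bigr)/N, \qquad N = \text{the normal subgroup generated by } \{\, i_0(w)\,i_1(w)^{-1} : w \in \pi_1(X_{01},\ast) \,\}.$$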

Proof. As Tyrone suggested in his comment, for a pointed space $(Z,z)$ let us denote by $\tilde Z$ the path-component of $Z$ containing the basepoint $z$. From Jackson's and your answers we know that for $X_{01}$ path connected and $* \in X_{01}$ we have $\tilde X_{01} := \tilde X_0 \cap \tilde X_1 = X_{01}$ and $\tilde X = \tilde X_0 \cup \tilde X_1$. Note that $X_{01}$ path connected is essential for both equations.

We apply tom Dieck's retraction construction to $Z = \tilde X$ and $z = * \in X_{01} = \tilde X_{01}$ by first choosing $u_x$ in $\Pi(X_{01})$ for all $x \in X_{01}$ (where of course $u_* = id_*$), then $u_x$ in $\Pi(\tilde X_0)$ for all $x \in \tilde X_0 \setminus X_{01}$, and finally $u_x$ in $\Pi(\tilde X_1)$ for all $x \in \tilde X_1 \setminus X_{01}$. Since $\Pi(X_{01}),\Pi(\tilde X_0), \Pi(\tilde X_1)$ are subcategories of $\Pi(\tilde X)$, this gives us a choice of $u_x$ in $\Pi(\tilde X)$ for all $x \in \tilde X$, providing a retraction $\tilde r : \Pi(\tilde X) \to \Pi(\tilde X,*) = \Pi(X,*)$.

We extend it to a retraction $r : \Pi(X) \to \Pi(X,*)$ as follows: Given a morphism $\alpha : x \to y$ in $\Pi(X)$, the points $x,y$ belong to the same path component $P$ of $X$. If $P = \tilde X$, we define $r(\alpha) = \tilde r(\alpha)$. If $P \ne \tilde X$, we define $r(\alpha) =id_*$.

Consider the restriction $r_{01}: \Pi(X_{01}) \to \Pi(X,*)$. The category $\Pi(X_{01})$ is a subcategory of $\Pi(\tilde X)$ and by construction $r_{01}(\Pi(X_{01})) = \tilde r(\Pi(X_{01})) \subset \Pi(X_{01},*)$, i.e. we may regard $r_{01}$ as a map $r_{01} : \Pi(X_{01}) \to \Pi(X_{01},*)$.

Next consider the restriction $r_0 : \Pi(X_0) \to \Pi(X,*)$. For the subcategory $\Pi(\tilde X_0) \subset \Pi(X_0)$ we have by construction $r_0(\Pi(\tilde X_0)) = \tilde r(\Pi(\tilde X_0)) \subset \Pi(X_0,*)$. Let $\tilde P_0$ be a path component of $X_0$ different from $\tilde X_0$, i.e. $\tilde P_0 \cap \tilde X_0 = \emptyset$. Then $\tilde P_0 \cap \tilde X = \tilde P_0 \cap \tilde X_1 = \tilde P_0 \cap X_0 \cap \tilde X_1 \subset \tilde P_0 \cap X_0 \cap X_1 = \tilde P_0 \cap \tilde X_0 \cap \tilde X_1 \subset \tilde P_0 \cap \tilde X_0 = \emptyset$. Thus $\tilde P_0 \cap \tilde X = \emptyset$ and therefore by construction $r_0(\Pi(\tilde P_0)) = r(\Pi(\tilde P_0)) = \{id_*\} \subset \Pi(X_0,*)$. We conclude $r_0(\Pi(X_0)) \subset \Pi(X_0,*)$, i.e. we may regard $r_0$ as a map $r_0 : \Pi(X_0) \to \Pi(X_0,*)$. Similarly $r$ restricts to $r_1 : \Pi(X_1) \to \Pi(X_1,*)$. Therefore we get a commutative diagram

$$\begin{matrix}\Pi(X_0) & \hookleftarrow & \Pi(X_{01}) & \hookrightarrow & \Pi(X_1)\\ \downarrow r_0 && \downarrow r_{01} &&\downarrow r_1 \\ \Pi(X_0,*) & \hookleftarrow & \Pi(X_{01},*) & \hookrightarrow & \Pi(X_1,*) \end{matrix}$$

Now the same argument as in the proof of tom Dieck's Theorem (2.6.2) applies.
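For readers without tom Dieck's book at hand, here is a sketch of how such an argument usually runs. It is a reconstruction under the assumption that (2.6.2) follows the standard pattern, not a quotation. The symbols $G$, $f_k$, $F$, $\varphi$, $\psi$ and $\iota_k$ are notation introduced here: $G$ is a group regarded as a groupoid with one object, $f_k : \Pi(X_k,*) \to G$ ($k = 0,1$) are homomorphisms that agree on $\Pi(X_{01},*)$, and $\iota_k : \Pi(X_k,*) \to \Pi(X,*)$ is induced by inclusion.

$$\begin{aligned}
&\text{(1)}\quad f_0 r_0 \text{ and } f_1 r_1 \text{ agree on } \Pi(X_{01}), \text{ because the diagram commutes and } f_0, f_1 \text{ agree on } \Pi(X_{01},*). \\
&\text{(2)}\quad \text{By Theorem (2.6.1) there is a unique functor } F : \Pi(X) \to G \text{ with } F|_{\Pi(X_k)} = f_k r_k. \\
&\text{(3)}\quad \text{Put } \varphi = F|_{\Pi(X,*)}. \text{ Since } r_k \text{ is the identity on } \Pi(X_k,*), \text{ we get } \varphi\,\iota_k = f_k. \\
&\text{(4)}\quad \text{If } \psi\,\iota_k = f_k \text{ as well, then } (\psi r)|_{\Pi(X_k)} = \psi\,\iota_k\,r_k = f_k r_k, \text{ so } \psi r = F \text{ and } \psi = (\psi r)|_{\Pi(X,*)} = \varphi.
\end{aligned}$$

Steps (3) and (4) together give existence and uniqueness of the induced homomorphism $\pi_1(X,*) \to G$, which is the pushout property in the category of groups.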